There are a lot of questions about how AI content detection works.

At Originality.ai, our AI detector has achieved 99% accuracy (Lite) and 99%+ accuracy (Turbo); check out our AI detector accuracy review and a meta-analysis of AI detection studies for more insights.

So, how does AI detection work behind the scenes?

Our engineering team provide the nitty-gritty details in this guide on how they trained the most accurate AI content detection tool.

Note: this article was first published using a previous model. Check out our guide on AI detection accuracy and learn about the best AI detector model for your use case to get insight into the latest developments at Originality.ai!

Research has shown that it is increasingly difficult for humans to identify whether a piece of text was created by a writer or AI-generated (try it for yourself with this quiz).

As the generating models get bigger and bigger, the quality of the generated text continues to improve rapidly.

Therefore, the use of a Machine Learning (ML) model for detection (ML-based detection) is an urgent problem.

However, detecting the synthesis document is still a difficult task.

The adversary can use datasets outside the training set distribution or use different sampling techniques to generate text, and many other techniques. Through extensive research, we did our best to deliver an optimal model in terms of architecture, data, and other techniques.

The tool's core is based on a modified version of the BERT model.

Also known as Bidirectional Encoder Representations from Transformers, BERT is a Google-developed artificial intelligence (AI) system for processing natural language.

We tested on multiple model architectures and concluded that with the same capacity, the discriminate model with having a more flexible architecture than the generative model (e.g bidirectional) makes it more powerful in detection.

Another thing we learned was that the larger the model used, the more accurate and robust the prediction will be. The usage model is large enough, yet still meets the response time, thanks to the most modern deployment techniques.

This is one of the reasons our AI detection tool can outperform any alternative.

We did not use BERT to train the model. We trained a pre-training language model with a completely new architecture, which is still a kind of architecture based on Transformer.

First, we trained a new language model that had a completely new architecture based on 160GB of text data.

One technique that we apply to train the model is similar to ELECTRA. The model is trained by two models: the generator and the discriminator. After training, the discriminator is used as our language model.

Next, we fine-tune the model on the training dataset that we built up to millions of samples.

The quality of the model depends on the input data.

Through the collection and processing (including the cleaning process) of a large amount of actual text from a variety of sources, we have a large amount of real text to train.

With generated text (in addition to using the best pre-trained generated models available today), we also build on that by fine-tuning with the existing real text dataset to make the generated text more natural. That way, it is as close to the real distribution text as possible, so that the model can learn the boundary for future inference connections.

Our training dataset is carefully generated using various sampling methods and is frequently manually reviewed by humans.

To generate text, there are many different sampling techniques, like temperature, Top-K, and nucleus sampling.

We expect that more advanced generation strategies (such as nucleus sampling) could make detection more difficult than generations produced via Top-K truncation.

Using a mixed dataset from different sampling techniques also helps to increase the accuracy of the model.

In our training data generation, we generate data in different ways and on many models, so that the data is diversified and the model better understands the types of text generated by AI.

The Challenges: One of the challenges of the detection model is detecting difficult short texts.

Although in very short texts, we have also improved the accuracy of the model in these cases by using our different techniques.

Therefore, the current model has improved accuracy on shorter texts. According to our experiments, with text lengths of 50 tokens or longer, the model has acceptable reliability.

AI is constantly evolving, with increasingly complex AI models being released regularly.

In 2025 alone, we’ve seen the release of models such as GPT-5, DeepSeek, and Claude 4 Sonnet and Claude 4 Opus.

Given the release of new and complex text-generation models, AI detectors require regular updating.

The model needs to be retested and evaluated before we decide whether to use its results or retrain it using the data from those new text-generation models.

However, with tests using the most recent SOTA models, the model we created can do its job very well.

Taking our proven model training method and applying it to other languages was a key priority on our roadmap. In 2025, we announced an expansion to our multilingual model, which now detects AI writing in 30 languages.

At the time of first writing this article, another one of our goals was to include sentence/paragraph-level highlighting for AI vs. human-written copy. That’s now an integral part of our AI detection tool!

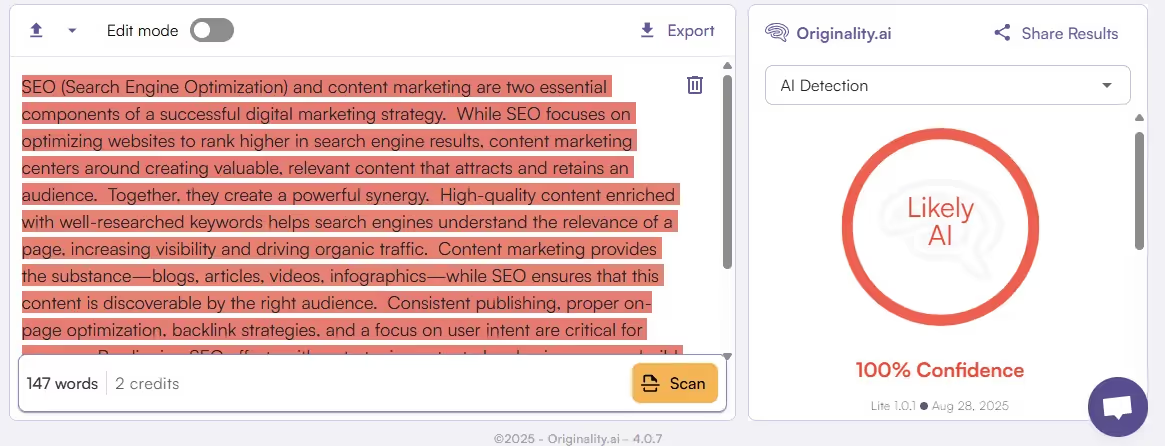

Check out an example below:

To demonstrate sentence-level highlighting, we first prompted ChatGPT to generate 150 words about SEO and content marketing.

Then, we scanned that text with the Originality.ai AI Checker:

The text was entirely generated by ChatGPT, and our AI Checker confidently predicted that it was 100% Likely AI.

On a sentence level, this means that the AI-generated text has dark-red highlighting.

If the text were original, it would show up with green highlighting.

Let’s take a human-written sample from one of our blog posts on GEO.

You can see in the screenshot below that the scan depicts the text in shades of green to indicate the % of confidence that it is human-written.

The model is trained on a million pieces of textual data labeled “human-generated” or “AI-generated.”

Then, during testing, we test the trained model on documents generated by popular artificial intelligence models.

Our AI detection models have industry-leading accuracy:

Find out which AI detection model is best for your use case.

Resources on AI Detection Accuracy:

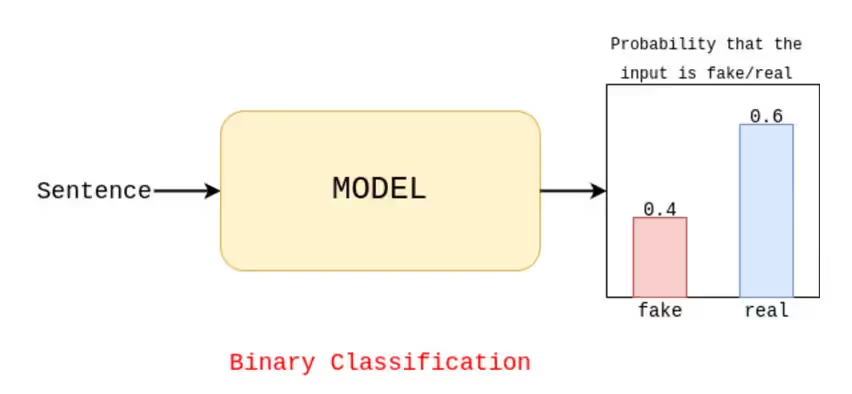

This is a binary classification problem because the objective is to determine whether the sentence was produced by AI.

After training, the model takes text as input and determines whether it is likely to have been generated by AI or not.

In order to determine the final outcome (or AI confidence score), we must first select a threshold. Then, if the probability result of the AI-generated input sentence exceeds that threshold, the output is fake.

Let’s take a look at an example:

The AI detection model estimates a 0.6 probability of human-written content (noted as ‘real’ in the image below) and a 0.4 probability of AI-generated content (noted as ‘fake’ in the image below).

If we use a threshold of 0.5, the output will be a human-written input text because 0.6 > 0.5.

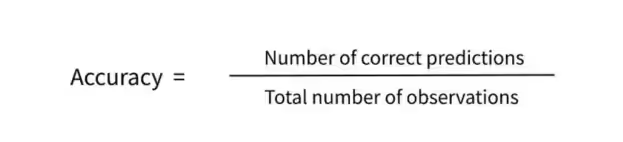

To evaluate the model, we use the accuracy measure of the label it predicts compared to the true label of the data. Informally, accuracy is the fraction of predictions our model got right.

What does this look like when you run an Originality.ai AI Detection scan?

Let’s go back to that ChatGPT-generated text sample. After running the text through the scan, the model predicted it was 100% Likely AI.

So, right beside the text input, you can clearly see the AI detection score.

Although many other metrics are also taken to test and evaluate the model more specifically, in detail, and more accurately, we hope this quick snapshot of how AI detection works provides insight into the technical details that power AI-detection at Originality.ai.

Try the most accurate AI Checker today!

Have a question? Reach out to our exceptional support team.

Learn about AI Detection and AI Detection Accuracy:

Learn about the impact of AI across industries: