‘Tis the season for self-improvement, and thousands of holiday gift-seekers will search Amazon for books about motivation, self-help, and success. Some will stumble upon a non-fiction series called “New Year, True You”, comprising five books by author Noah Felix Bennett, including titles such as New Year, Real You and From Resolutions to Results, all posted at $11.99 per paperback (at the time of writing this study).

Remarkably, Bennett published all five in the span of just three days, between Sept. 29 and Oct. 1, 2025.

And that’s not the half of it. Bennett has, in fact, published 74 books on Amazon, all between May and October 2025, on self-help topics including porn addiction, solo parenting, and toxic workplaces. (He even has a series of relationship-advice books, including How to Play with Your Wife’s Mind: Emotional Secrets to Keep Your Wife Devoted, Passionate, and Deeply Connected and How to Play with Your Husband’s Mind: Emotional Tactics to Keep Your Husband Curious, Loving, and Engaged; and, adhering to the old adage of “Create a problem, sell a solution,” he has also penned a book called Toxic Love: How to Break Free from an Emotionally Abusive Relationship.)

Bennett can produce content at an inhumanly prolific rate because he is likely using artificial intelligence. He is one of hundreds of authors we’ve found in Amazon’s “Success” subgenre who appear to use AI to write and sell books for mass consumption.

We at Originality.ai conducted a study of 844 books in Amazon’s “Success” subcategory, a division of its broader “Self-Help” genre, all published within a three-month span, between Aug. 31 and Nov. 28, 2025.

In this broad subcategory—beyond popular bestsellers by Napoleon Hill, Mel Robbins, and Michelle Obama—consumers can find a dizzying amount of motivational content published multiple times a day, the vast majority of which is likely written by AI.

Not only is this damaging for Amazon’s brand and unfair to buyers who unwittingly purchase generic AI content, but it also creates a mountain of AI-generated self-help slop that real authors must slog through to have their voices heard and earn some money from their labour.

When analyzing book pages on Amazon, we scan three elements: the product description (also known as a summary), which is the main sales copy on any given page; the author bio; and the sample text (also known as a preview).

Originality.ai requires a minimum of 100 words, so many author bios—and some summaries or samples—do not meet our requirements. Those fields are left blank or marked as N/A.

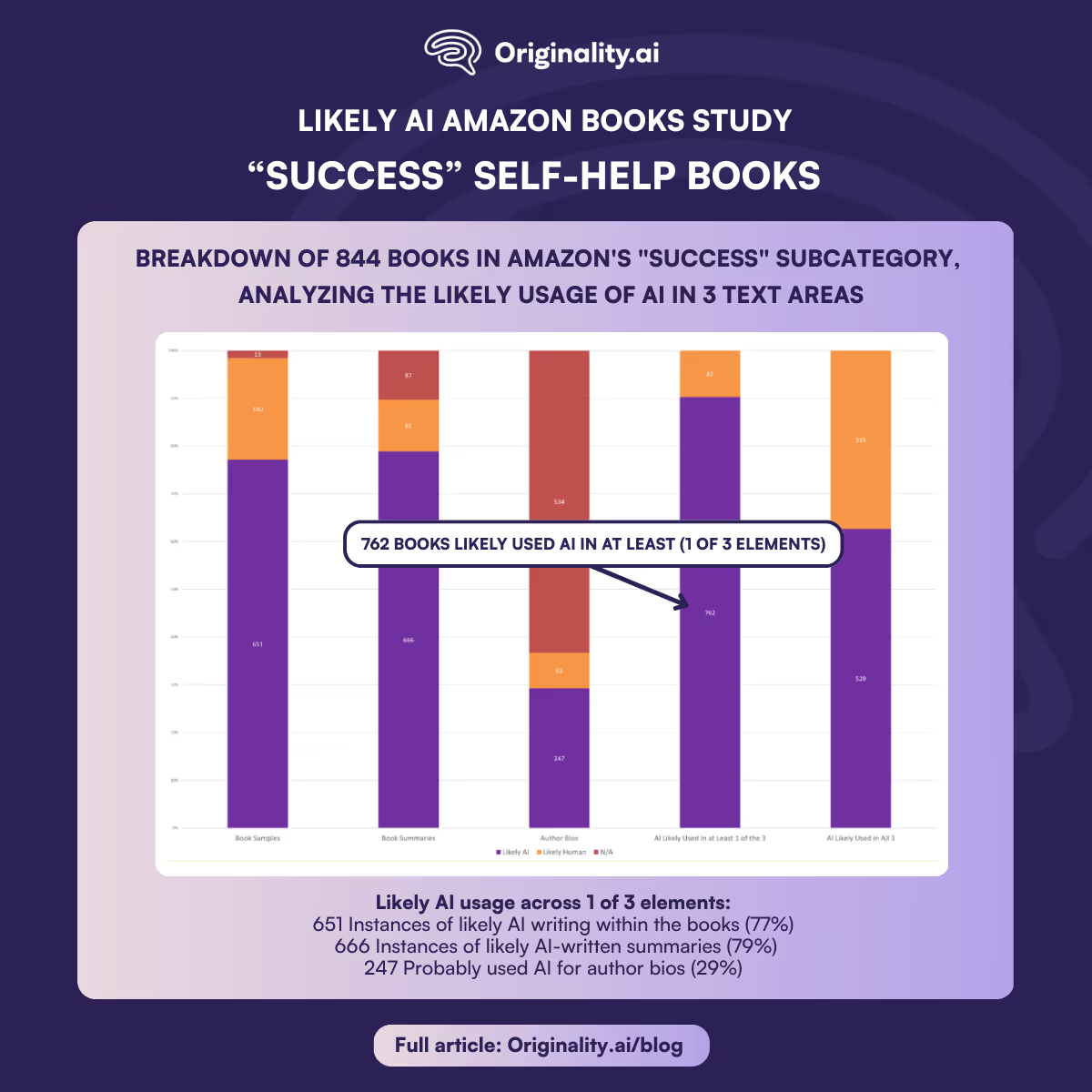

Of the 844 titles we scanned, 762 books—90%—likely used AI in at least one of those three elements.

Roughly equal numbers of summaries and book samples were likely written by AI. We found 666 likely AI-written summaries (79%) and a slightly lower number of books with likely AI writing in the sample text: 651 (77%).

For most books (534, or 63%), the author either had no bio or had one that fell below our 100-word minimum. Of the remaining books with scannable bios, 247 (29% of the total dataset) probably used AI for the bio, while only 63 (7%) had bios that were likely written by a human.
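
The shares above follow directly from the reported counts. As a minimal sketch, they can be recomputed as below; the dictionary keys are illustrative labels chosen for this example, not field names from our tool.

```python
# Counts reported in this study; the key names are illustrative labels.
TOTAL_BOOKS = 844

element_counts = {
    "ai_in_any_element": 762,
    "ai_summary": 666,
    "ai_sample": 651,
}

# The three bio buckets should account for every book in the dataset.
bio_buckets = {
    "bio_missing_or_too_short": 534,
    "ai_bio": 247,
    "human_bio": 63,
}


def share(count: int, total: int = TOTAL_BOOKS) -> int:
    """Return count as a whole-number percentage of total."""
    return round(100 * count / total)


shares = {label: share(n) for label, n in element_counts.items()}
bio_total = sum(bio_buckets.values())

for label, pct in shares.items():
    print(f"{label}: {element_counts[label]} of {TOTAL_BOOKS} ({pct}%)")
print(f"bio buckets cover {bio_total} of {TOTAL_BOOKS} books")
```

Checking that the bio buckets sum to the full dataset is a quick way to confirm that every book was assigned to exactly one group.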

In addition to scanning all available text, we checked certain metrics, including the paperback cost in USD, the number of reviews, the number of pages, and the publication date.

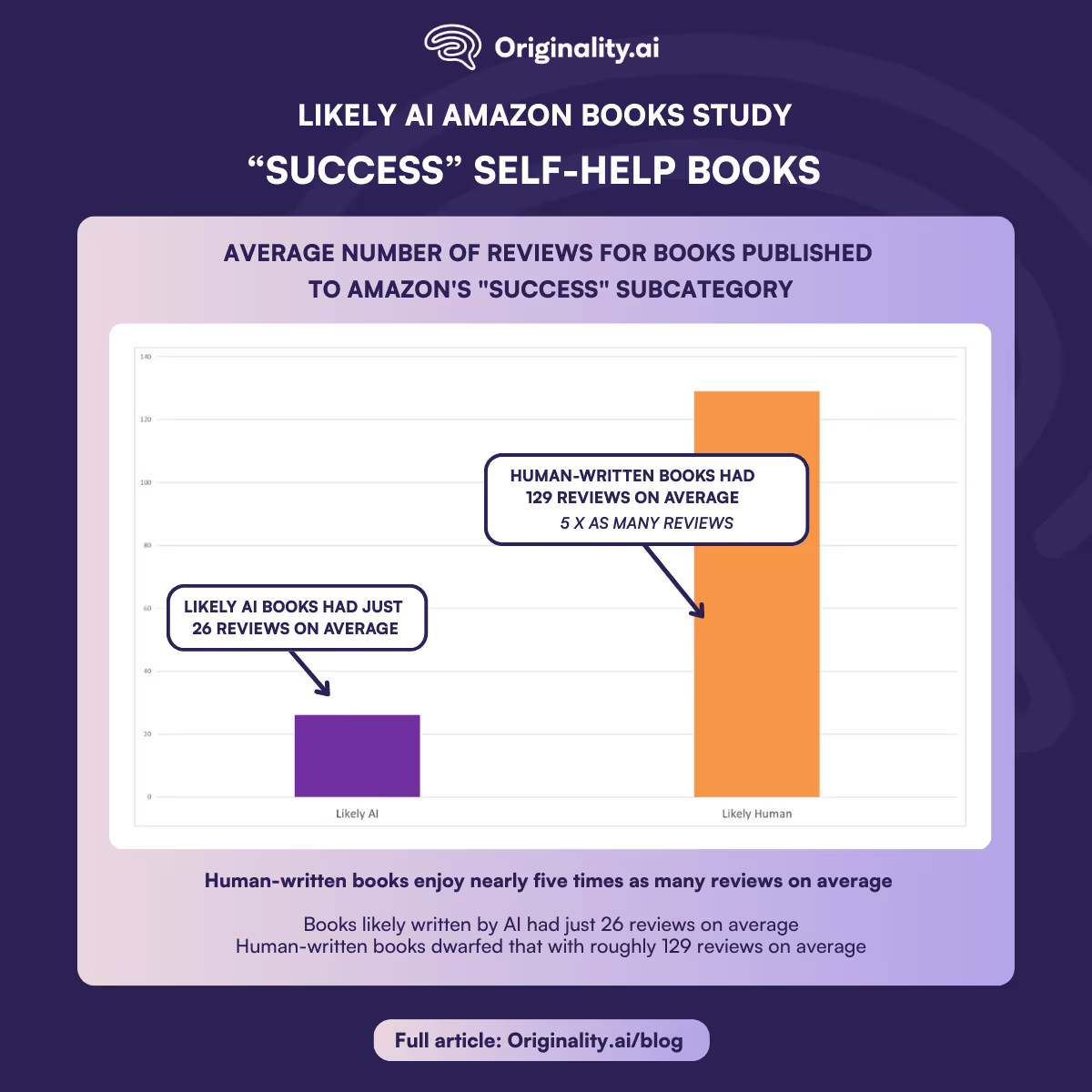

Of these metrics, the number of reviews stood out as a significant difference between likely AI and likely human writing.

Books likely written by AI had just 26 reviews on average, while human-written books dwarfed that with roughly 129, nearly five times as many.

Digging into the numbers, there is a clear explanation for this.

The human-written average is skewed significantly by a few republished classic books, mostly in the public domain, which have been “curated and formatted” for modern distribution. Someone named Hanson Dean is behind several of the most popular examples, including Wallace D. Wattles’ 1910 work The Science of Getting Rich. Dean no doubt took its lessons to heart: his edition has garnered more than 10,000 Amazon reviews while billing itself as a “Premium Classic Edition” and slapping a copyright claim on the “modern layout, typography and cover design.”

Setting aside the ethics of capitalizing on the public-domain work of a long-dead Socialist, those few titles outweigh everything by, for example, the earlier-referenced Noah Felix Bennett, whose books have one or two reviews apiece.

Yet even if we were to arbitrarily omit every book with more than 700 reviews—the top 13 titles in this dataset, when sorted by reviews—human-written books would still enjoy more than double the number of reviews, averaging 17 reviews to just seven for likely AI content.
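
The effect of a handful of heavily reviewed titles on a group average can be illustrated with a small sketch. The records below are hypothetical toy data, not rows from our dataset, and the field names (`likely_ai`, `reviews`) are assumptions made for the example; only the 700-review cutoff comes from the analysis above.

```python
from statistics import mean

# Hypothetical toy records, NOT rows from the study's dataset.
books = [
    {"title": "Reissued classic", "likely_ai": False, "reviews": 10_000},
    {"title": "Human book A", "likely_ai": False, "reviews": 20},
    {"title": "Human book B", "likely_ai": False, "reviews": 10},
    {"title": "AI book A", "likely_ai": True, "reviews": 2},
    {"title": "AI book B", "likely_ai": True, "reviews": 8},
]


def average_reviews(records, likely_ai, max_reviews=None):
    """Mean review count for one group, optionally dropping outliers.

    When max_reviews is given, books with more reviews than that are excluded.
    Returns None if no books remain in the group.
    """
    values = [
        r["reviews"]
        for r in records
        if r["likely_ai"] == likely_ai
        and (max_reviews is None or r["reviews"] <= max_reviews)
    ]
    return mean(values) if values else None


human_all = average_reviews(books, likely_ai=False)
human_capped = average_reviews(books, likely_ai=False, max_reviews=700)
ai_capped = average_reviews(books, likely_ai=True, max_reviews=700)

print(human_all, human_capped, ai_capped)
```

In the toy data, a single title with 10,000 reviews lifts the human average into the thousands, while the capped comparison shows the gap that remains once it is set aside.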

The data is clear: more reviews, more likely to be written by a real person. That’s a positive sign in a world of increasing AI slop.

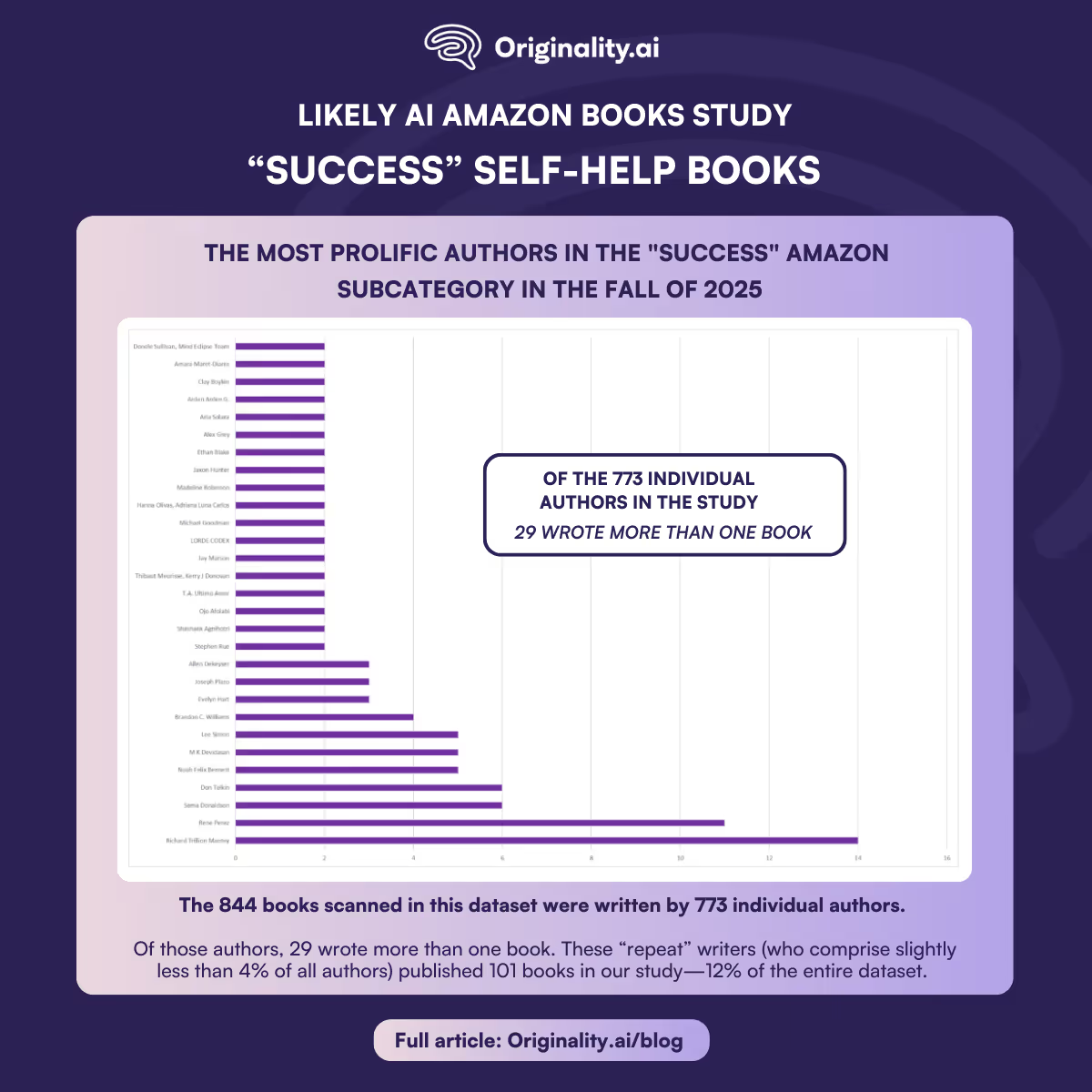

The 844 books scanned in this dataset were written by 773 individual authors. Of those authors, 29 wrote more than one book. These “repeat” writers (who comprise slightly less than 4% of all authors) published 101 books in our study—12% of the entire dataset.

Most repeaters stuck to two books. Some published five books or more. All of the 4% of repeat writers likely used AI to publish so frequently.
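
For readers who want to check the repeat-author figures, here is a minimal sketch. The totals are the ones reported above; the author names in `sample` are hypothetical and only demonstrate the grouping step.

```python
from collections import Counter

# Figures reported in this study.
TOTAL_BOOKS = 844
TOTAL_AUTHORS = 773
REPEAT_AUTHORS = 29
BOOKS_BY_REPEAT_AUTHORS = 101

repeat_author_share = 100 * REPEAT_AUTHORS / TOTAL_AUTHORS
repeat_book_share = 100 * BOOKS_BY_REPEAT_AUTHORS / TOTAL_BOOKS


def repeat_authors(author_per_book):
    """Return {author: book_count} for authors with more than one book."""
    counts = Counter(author_per_book)
    return {author: n for author, n in counts.items() if n > 1}


# Hypothetical author names, one entry per book, to show the grouping step.
sample = ["Author A", "Author B", "Author A", "Author C", "Author A"]

print(repeat_authors(sample))
print(f"{repeat_author_share:.1f}% of authors, {repeat_book_share:.1f}% of books")
```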

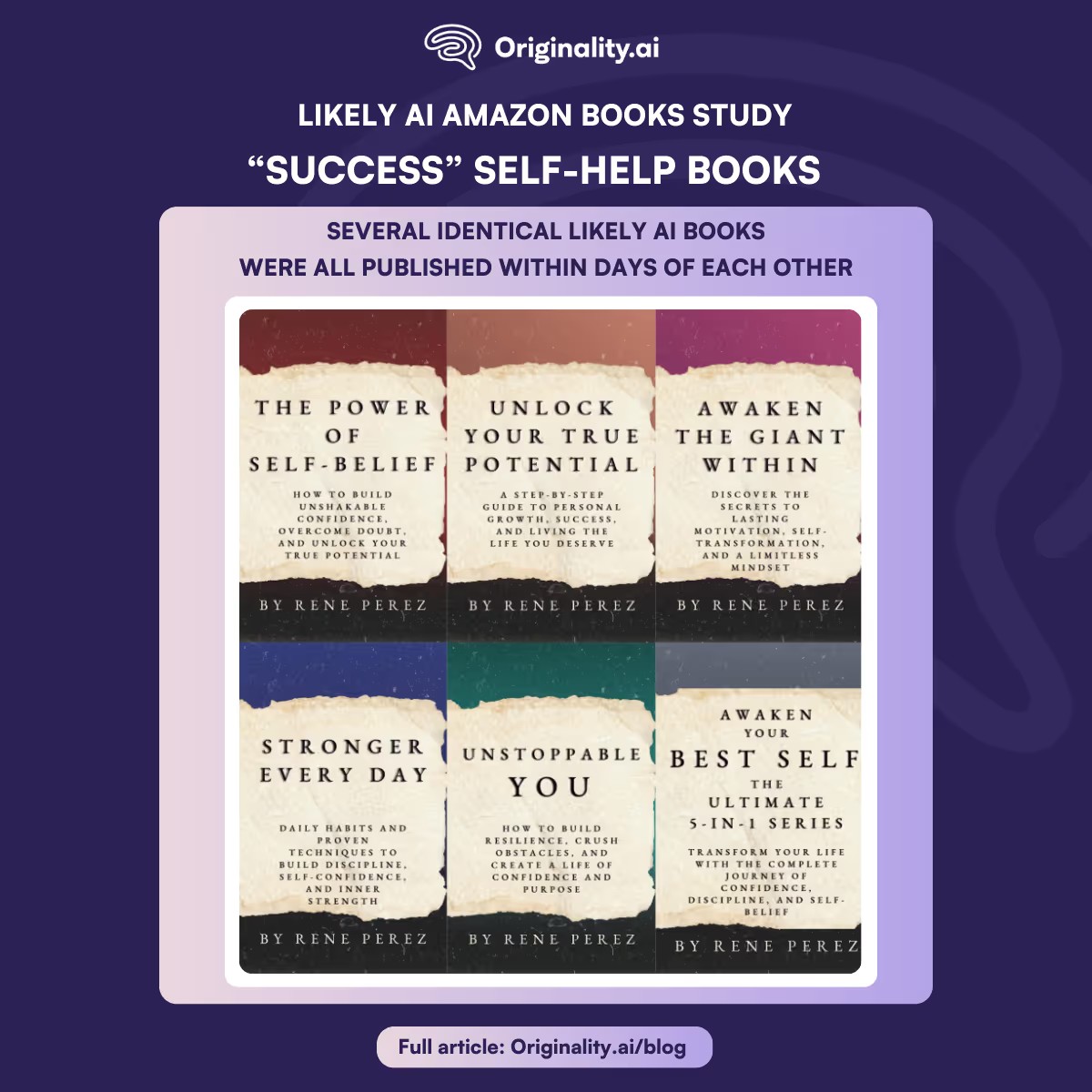

One of the most extreme examples is Rene Perez, who published a hefty series of motivational books in the span of just three days.

To be fair, while other deceptive Amazon subcategories contain significant numbers of outright fake authors, the “Success” subcategory more often features real people who simply use AI to write their books. Shout-out to Richard Trillion Mantey, far and away the most prolific author in this dataset (14 books published in three months! A fraction of the 397 books to his name as of early December 2025), who—despite likely using AI in every book we scanned—also does podcast interviews, and isn’t afraid to put his real name and face out there.

There’s an important distinction worth making between strangers who hide behind AI-generated names and fake photos, and real people with less-than-fluent English skills using AI to write under their own names. Not every author who uses AI is the same.

The “Success” book subcategory is broad, encompassing topics such as wealth, relationships, mental health, business development, and personal empowerment.

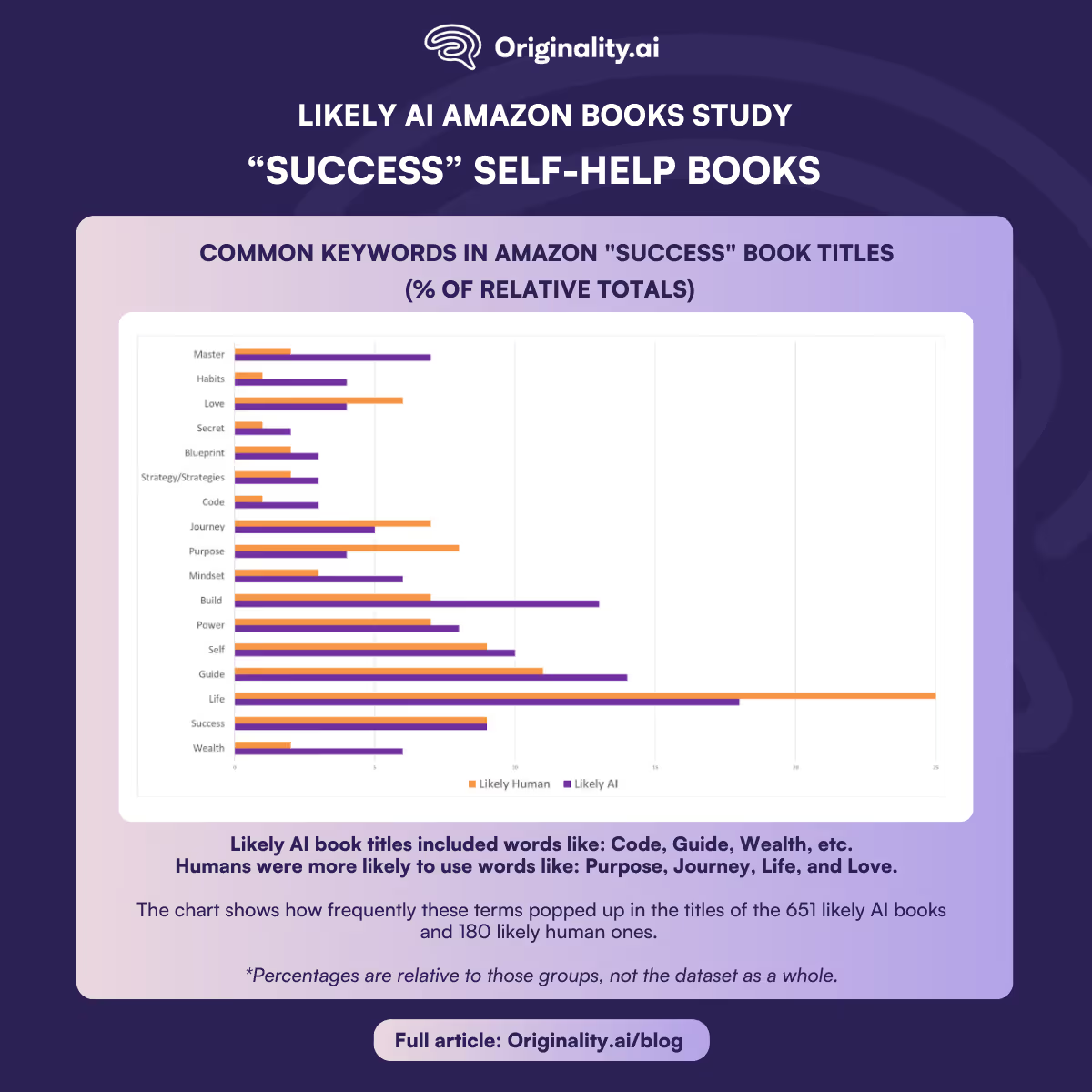

Within likely AI content, common keywords emerge—and they tend to lack emotion.

Likely AI book titles more often included words like “Code”, “Guide”, “Wealth”, “Build”, “Secret”, “Strategies”, “Master”, “Blueprint”, “Habits”, and “Mindset”— neutral terms pitching tips and tools to readers.

Humans, on the other hand, were more likely to use grandiose and emotional words, such as “Purpose”, “Journey”, “Life”, and “Love”.

The chart below shows how frequently these terms popped up in the titles of the 651 likely AI books and 180 likely human ones. (Percentages are relative to those groups, not the dataset as a whole.)

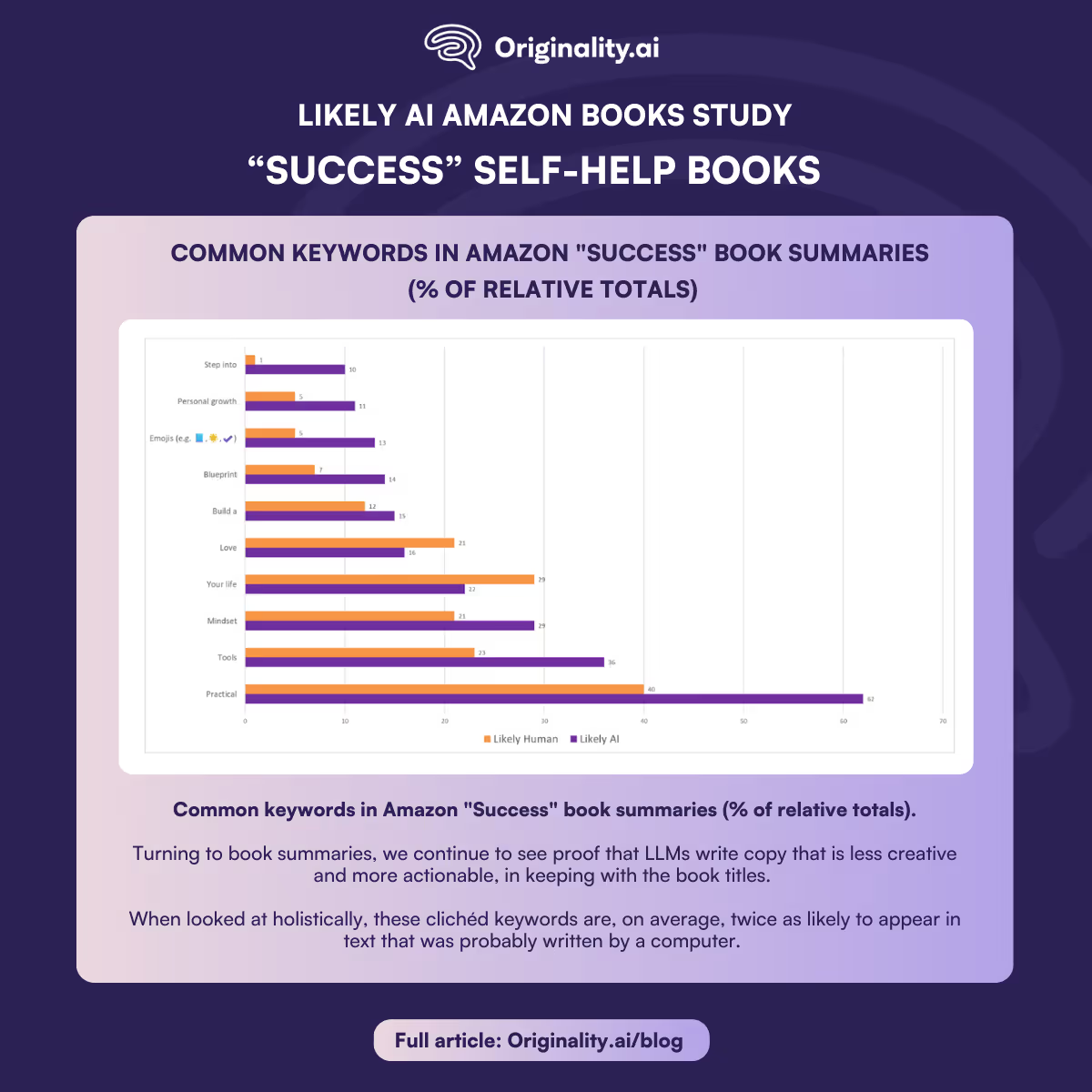

Turning to book summaries, we continue to see evidence that LLMs write copy that is less creative and more actionable, in keeping with the book titles.

When looked at holistically, these clichéd keywords are, on average, twice as likely to appear in text that was probably written by a computer.

To better understand this data, we looked at the absolute counts of each term, then determined their values relative to their totals. Our dataset included 666 likely AI-written summaries, compared to just 91 written by humans, and a further 87 that didn’t meet our minimum word count.

In keeping with humans’ preferred emotional language, “Love” and “Your life” both appear relatively more often in human-written summaries. They were used by probable LLMs 104 and 147 times, respectively, representing 16% and 22% of that group; this is in contrast with their usage by humans (19 and 26 instances, or 21% and 29%, respectively).
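
Because the two groups differ so much in size, raw counts are misleading on their own; the comparison only works once each count is divided by its group total. A minimal sketch of that normalization follows, using the counts reported above. The case-insensitive substring match in `contains_keyword` is an assumed matching rule for illustration, not a description of how our scan worked.

```python
# Group sizes and keyword counts reported for book summaries.
GROUP_SIZES = {"likely_ai": 666, "likely_human": 91}

keyword_counts = {
    "love": {"likely_ai": 104, "likely_human": 19},
    "your life": {"likely_ai": 147, "likely_human": 26},
}


def relative_share(count, group_size):
    """Whole-number percentage of a group's summaries containing a keyword."""
    return round(100 * count / group_size)


def contains_keyword(text, keyword):
    """Case-insensitive substring match; an assumed matching rule."""
    return keyword.lower() in text.lower()


relative = {
    kw: {g: relative_share(n, GROUP_SIZES[g]) for g, n in by_group.items()}
    for kw, by_group in keyword_counts.items()
}

for kw, by_group in relative.items():
    print(kw, by_group)
```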

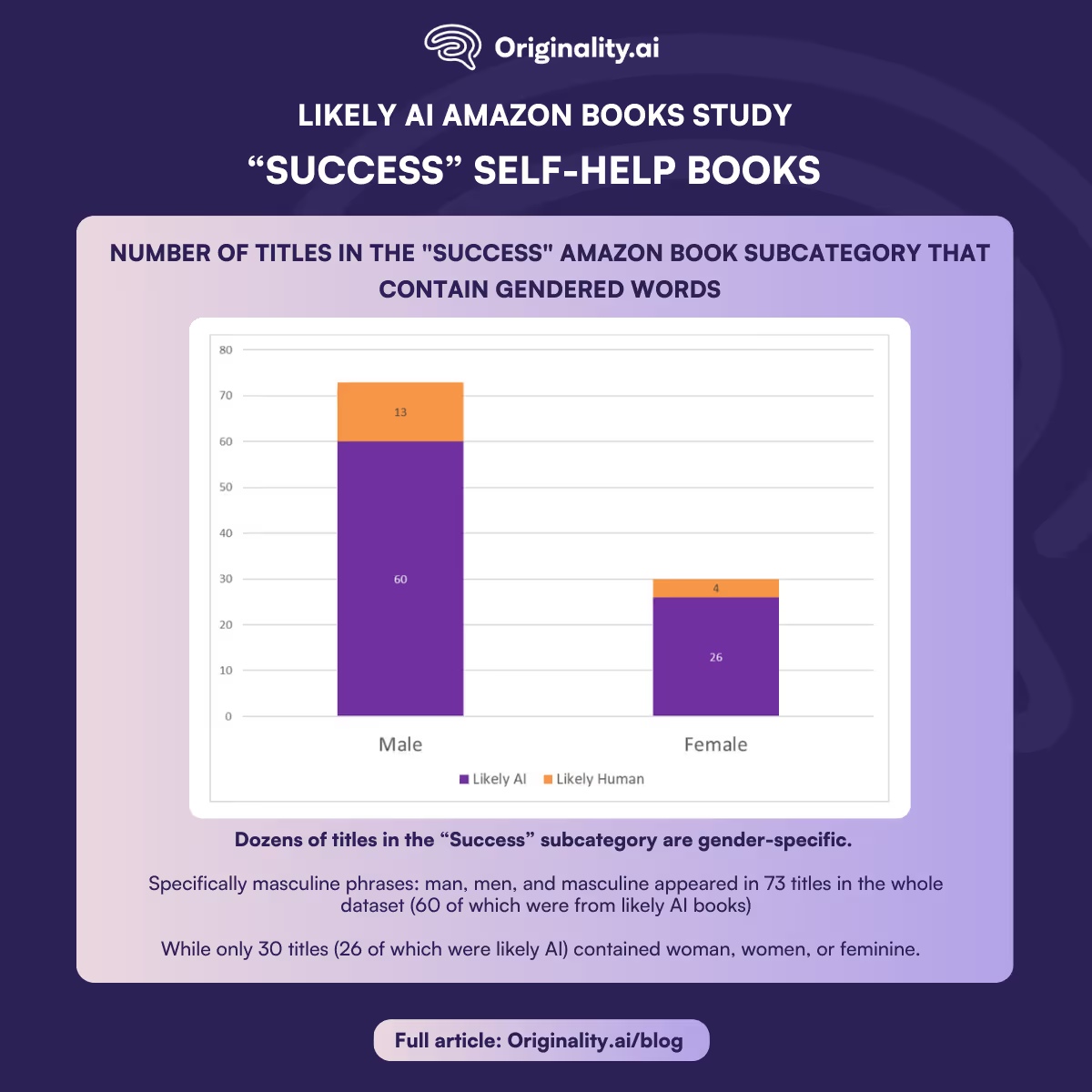

While not the most prominent theme, dozens of titles in the “Success” subcategory are gender-specific. Specifically masculine phrases such as “man”, “men”, and “masculine” appeared in 73 titles in the whole dataset (60 of which were from likely AI books), while only 30 titles (26 of which were likely AI) contained “woman”, “women”, or “feminine”.

Unlike some other “Self-Help” subcategories, the “Success” field clearly skews male, with themes including “mastering” one’s life, “building” a career, creating strong habits and blueprints for success, and gaining wealth and power.

The above analysis is only a snapshot of the broader reality, as we scanned only for specific keywords (e.g. literally “man” and “men”). That means we did not count, for example, “Scars to Strength — 22 Laws of Self-Mastery: The Blueprint to Reign Over Your Life”, which is obviously, if only implicitly, male-coded.

Female-oriented titles also deal largely with success in business, but the market simply appears to be less than half the size.

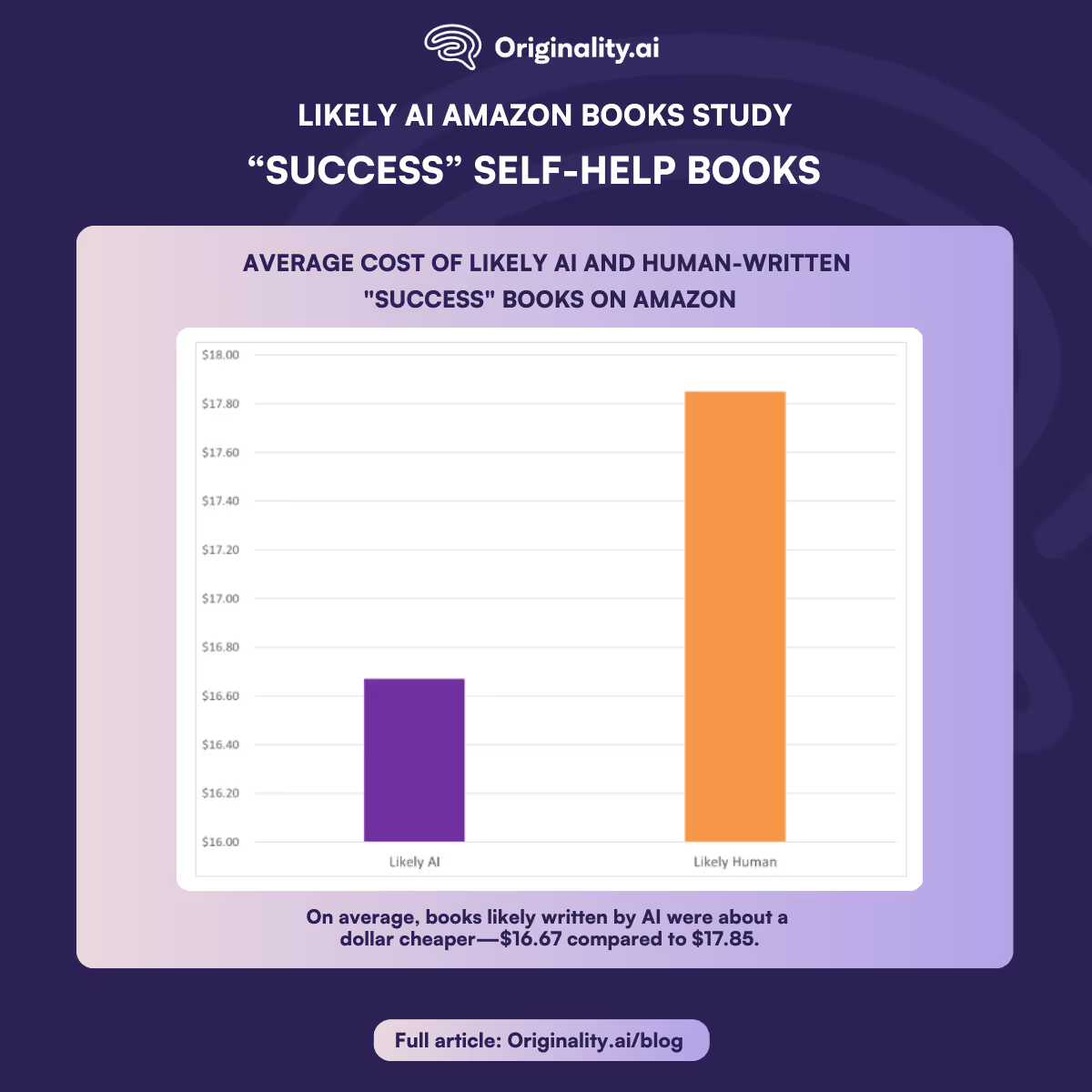

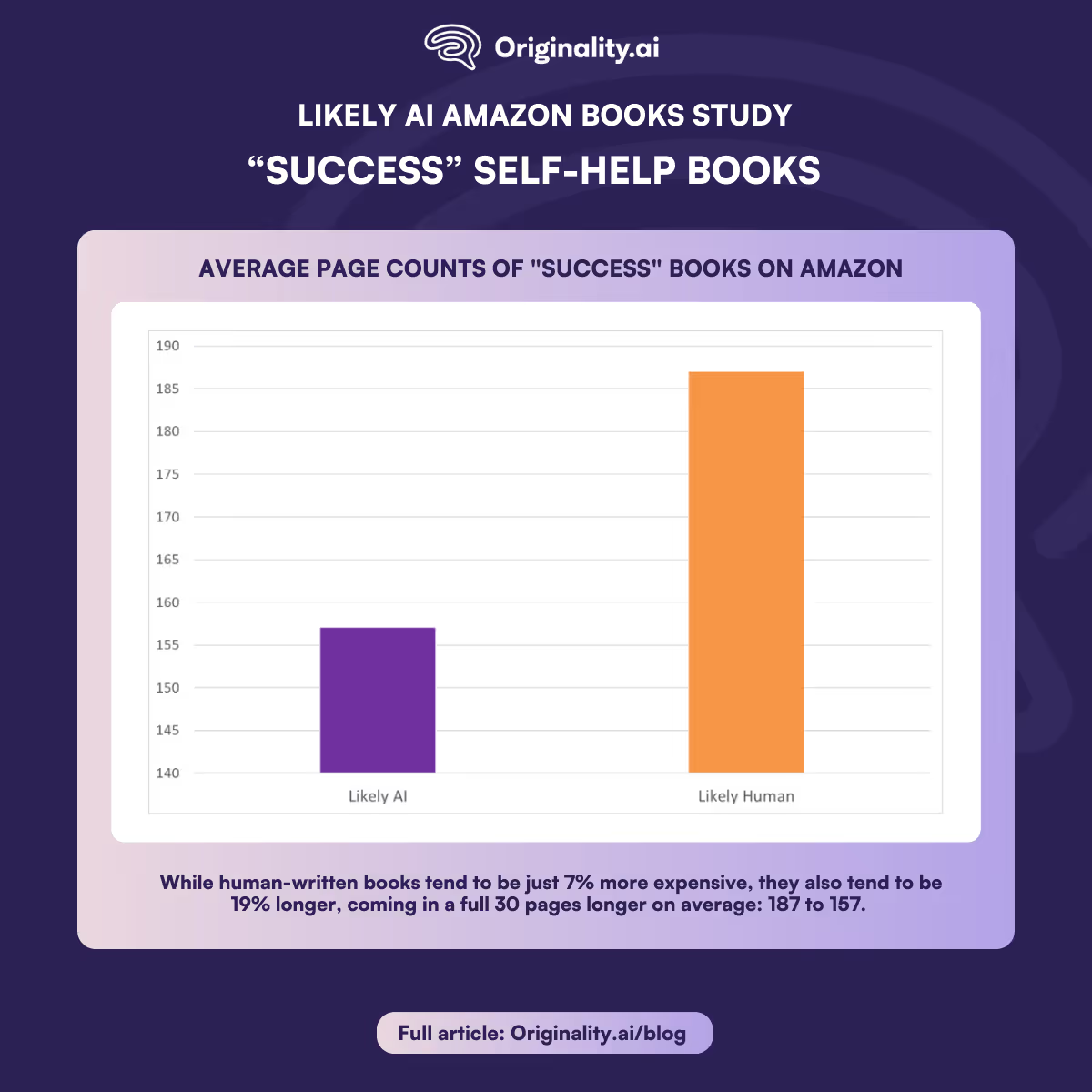

As previously discovered in a separate analysis of probable AI content on Amazon, LLMs tend to write shorter books than humans do—and their publishers tend to charge less as a result. Findings from this study corroborate that.

On average, books likely written by AI were about a dollar cheaper—$16.67 compared to $17.85.

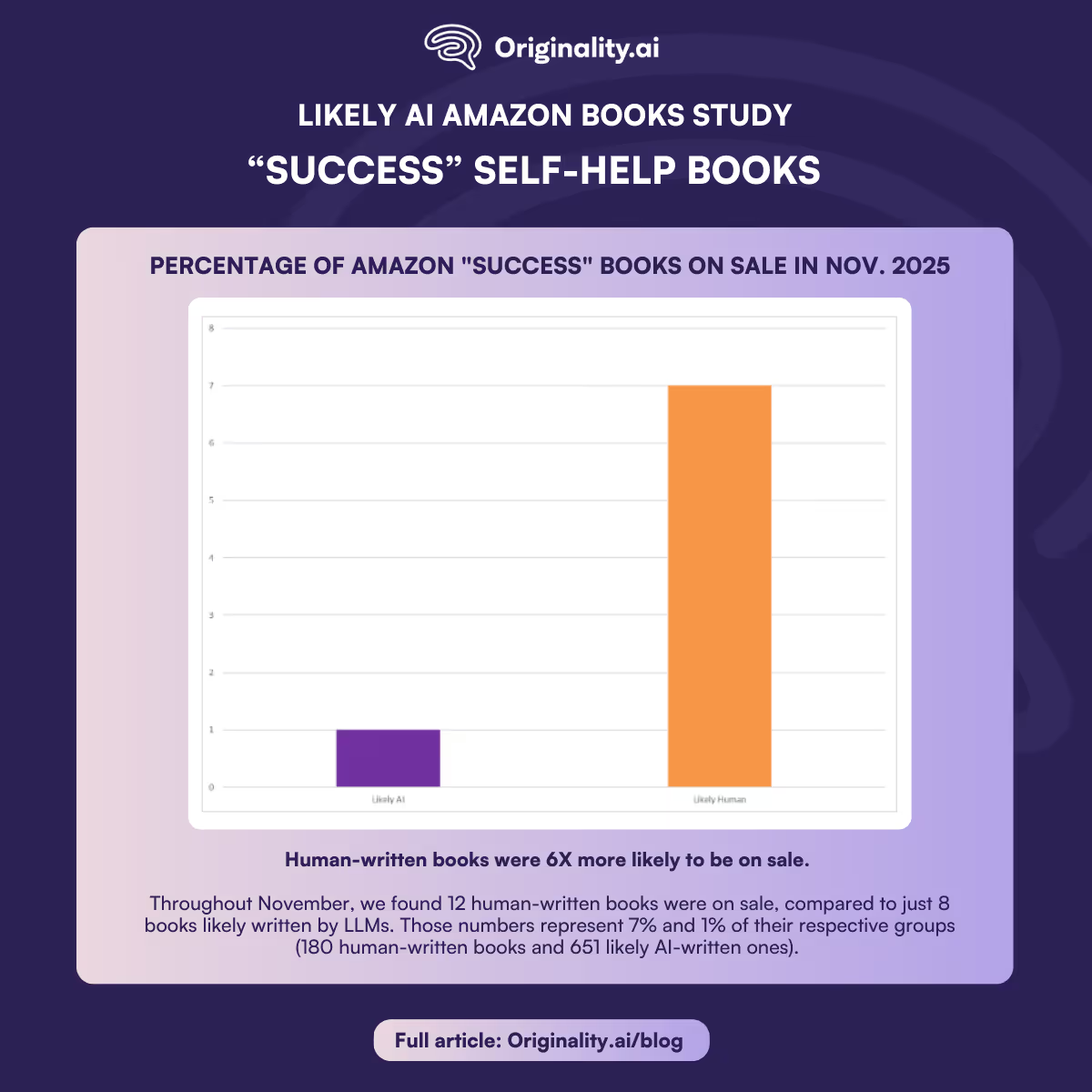

Also corroborating our previous findings, human-written books were more than five times as likely to be on sale. The sample size is small and ever-shifting, as sales come and go daily, but when we scanned these 844 books throughout November, we found 12 human-written books on sale, compared to just eight books likely written by LLMs. Those numbers represent roughly 7% and 1% of their respective groups (180 human-written books and 651 likely AI-written ones).

While human-written books tend to be just 7% more expensive, they also tend to be 19% longer, coming in a full 30 pages longer on average: 187 to 157.

To summarize these numbers, humans will charge a little bit more—but will more than offset that with additional content.
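
The trade-off between price and length can be made concrete with a little arithmetic. The sketch below uses the averages reported above; the price-per-page figure is a derived illustration, not a metric we measured directly.

```python
# Averages reported in this study (paperback price in USD, page count).
ai_price, human_price = 16.67, 17.85
ai_pages, human_pages = 157, 187

# How much more expensive and how much longer human-written books are.
price_premium = 100 * (human_price / ai_price - 1)
length_premium = 100 * (human_pages / ai_pages - 1)

# Price per page is a derived figure, not one reported in the study.
ai_cost_per_page = ai_price / ai_pages
human_cost_per_page = human_price / human_pages

print(f"price premium: {price_premium:.0f}%, length premium: {length_premium:.0f}%")
print(f"per page: AI ${ai_cost_per_page:.3f}, human ${human_cost_per_page:.3f}")
```

On these averages, human-written books work out to roughly 9.5 cents per page, against roughly 10.6 cents for books likely written by AI.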

Self-help has been a skyrocketing category over the last decade. Unit sales of self-help books grew at a compound annual growth rate of 11% from 2013-2019, according to a report by NPD Group in 2020, culminating in 18.6 million units sold in 2019.

Then came the pandemic, when interest in mental health and personal well-being took another leap: January 2021 saw booming sales of print books, largely thanks to renewed interest in the mindfulness and self-help categories.

AI has taken this to another level. Self-help books are often more personal and can easily be less researched, which makes them fertile ground for LLMs to spout off empowering maxims. When looking for guidance, everyday people are already turning to LLMs independently; the impact that AI has had on industries such as consulting and therapy, for better or worse, is well-documented.

From that perspective, why should anyone want to pay $11.99 for a book whose contents they could get for free?

Books—even self-published ones—carry the implicit promise of legitimacy and authority. In this category, male-oriented tips for “mastering your emotions” and bogus stories of self-fulfillment are diluting the pool and taking advantage of Amazon’s inability to catch or unwillingness to crack down on unlabelled AI-generated books.

Ironically, one of the 844 books in this dataset is called How to Write for Humans in an AI World: Cutting Through Digital Noise and Reaching Real People.

In it, the author laments the proliferation of AI-written content:

“The words we see online, in our inboxes, even in news articles, often feel like they were written by no one in particular,” he writes. “They’re grammatically perfect and emotionally empty. They’re fluent, but soulless. The irony is that we’ve never written more than we do today. We’re producing mountains of content: posts, captions, pitches, texts, and endless emails. At the same time, in the midst of all that noise, something essential is fading. It’s the sense that a real person is speaking to another real person.”

That book’s contents were flagged as likely AI-generated.

For this study, we scanned every available book published in the “Success” subcategory of “Self-Help” between Aug. 31 and Nov. 28, 2025.

Due to the rate at which new content is published to Amazon’s platform, we set parameters for qualification. Each book must:

With those filters set, we scanned for multiple data points, including the paperback cost in USD, the number of reviews, the number of pages, and the publication date.

In addition, we reviewed three publicly available sections of text for each book: the summary (also known as the description), the author biography, and the sample pages (also known as the preview). All three text segments were bulk scanned by the latest Originality.ai model, Lite 1.0.2.

The resulting .xlsx file included columns for AI scores for each segment of text, and a TRUE/FALSE grade for whether that segment was likely written by AI.

When books’ bios or descriptions were under the 100-word minimum for Lite 1.0.2 to detect AI, our tool marked that field as N/A and moved on. When samples were unavailable or insufficient for scanning, the tool would occasionally skip those entries entirely, and other times leave the field blank.
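
To illustrate how per-segment flags like these can roll up into a single "likely used AI in at least one element" result, here is a minimal sketch. The column names are hypothetical, `None` stands in for an N/A or blank field, and the logic is one plausible way to combine the flags rather than a description of how the tool or our spreadsheet actually did it.

```python
from typing import Optional

# Hypothetical column names; the real spreadsheet headers may differ.
SEGMENTS = ("summary_is_ai", "bio_is_ai", "sample_is_ai")


def likely_ai_in_any(row: dict) -> Optional[bool]:
    """True if any scanned segment was flagged, None if nothing was scannable.

    A value of None stands in for an N/A or blank field and is ignored.
    """
    flags = [row.get(col) for col in SEGMENTS if row.get(col) is not None]
    if not flags:
        return None
    return any(flags)


# Hypothetical rows: one flagged summary, one fully human, one unscannable.
rows = [
    {"summary_is_ai": True, "bio_is_ai": None, "sample_is_ai": False},
    {"summary_is_ai": False, "bio_is_ai": False, "sample_is_ai": False},
    {"summary_is_ai": None, "bio_is_ai": None, "sample_is_ai": None},
]
results = [likely_ai_in_any(r) for r in rows]
print(results)
```

Keeping unscannable fields as a separate third state, instead of treating them as human-written, avoids undercounting AI use in books where only one or two segments could be checked.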

We used Microsoft Excel to cross-reference and visualize all data.
